Projects on Data Analytics Using R

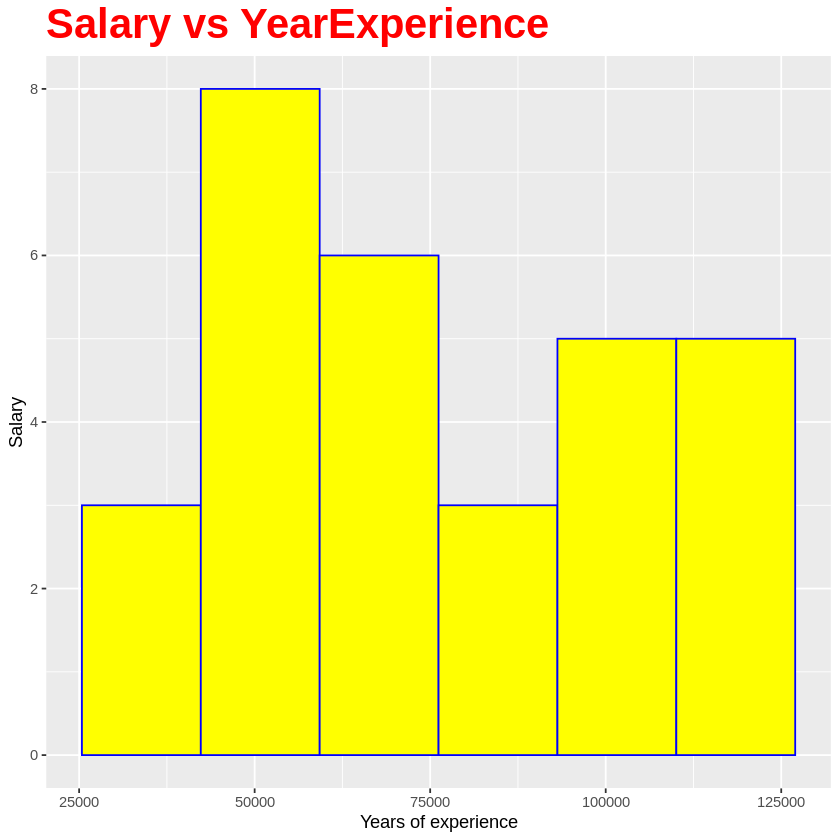

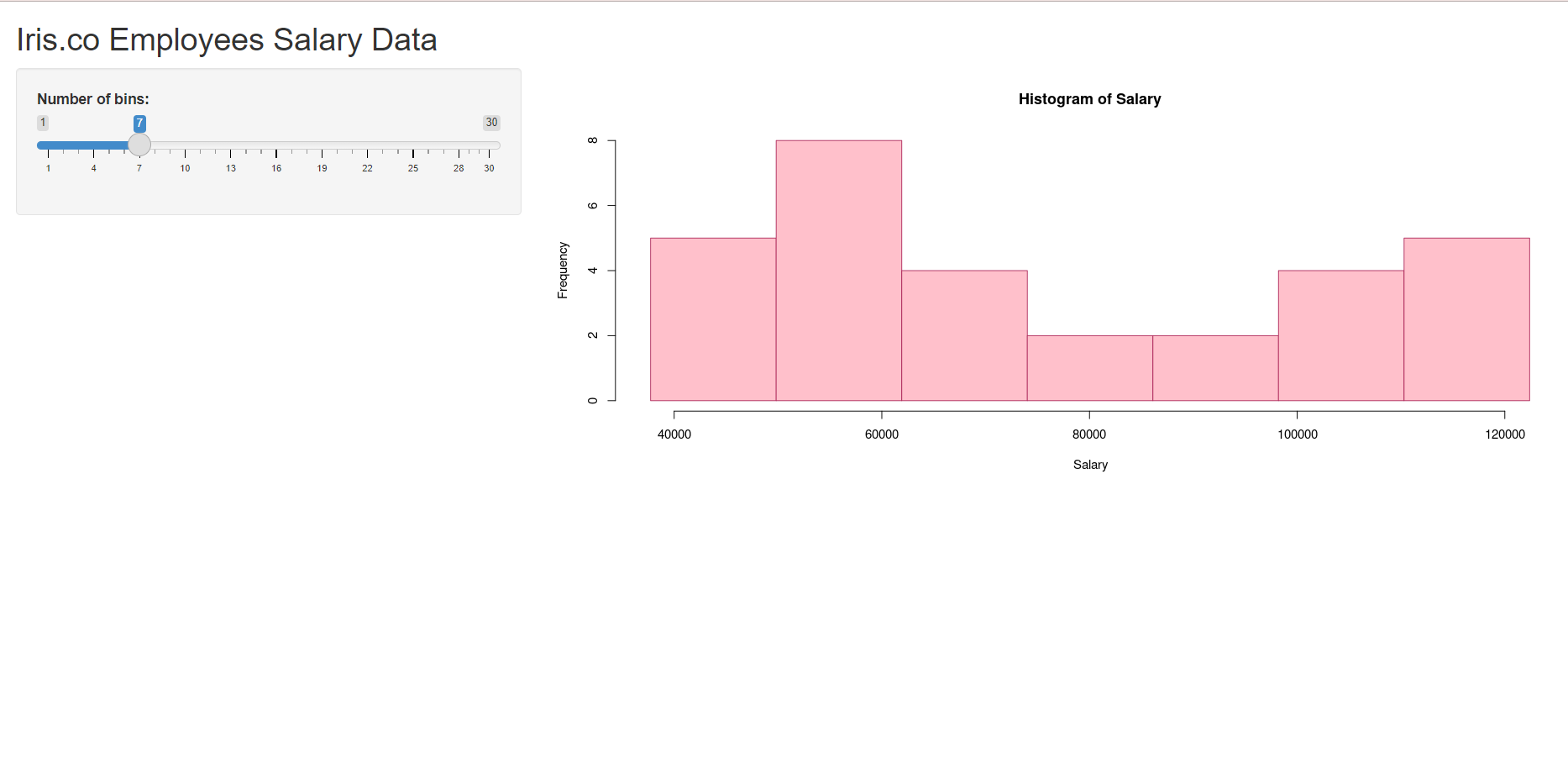

Hi, we are from Iris Group. Our dataset is about the salary of 30 employees in our company. After analyzing and exploring the data, we used a histogram to visualize it. For the ML algorithm, we chose Simple Linear Regression because our data consists of only one input and one output, and both columns are continuous numerical data.
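As a rough illustration of the approach described above, here is a minimal sketch of a simple linear regression in base R. The data is synthetic, and the column names `years_experience` and `salary` are assumptions made for the example; they are not taken from our actual dataset.

```r
# Hypothetical data: 30 rows, one numeric input and one numeric output.
# Column names and values are illustrative only.
set.seed(42)
salary_data <- data.frame(years_experience = seq(1, 10.5, length.out = 30))
salary_data$salary <- 25000 + 9500 * salary_data$years_experience +
  rnorm(30, mean = 0, sd = 3000)

# Fit the output as a linear function of the single input.
model <- lm(salary ~ years_experience, data = salary_data)
print(coef(model))

# Predict the output for a new, unseen input value.
new_employee <- data.frame(years_experience = 5)
predicted <- predict(model, newdata = new_employee)
print(predicted)

# Histogram of the output column, similar to the visualization step.
hist(salary_data$salary, main = "Salary distribution", xlab = "Salary")
```

The same `lm()` call works with any pair of continuous columns, which is why this algorithm suits a dataset with one numeric input and one numeric output.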

To view the histogram:

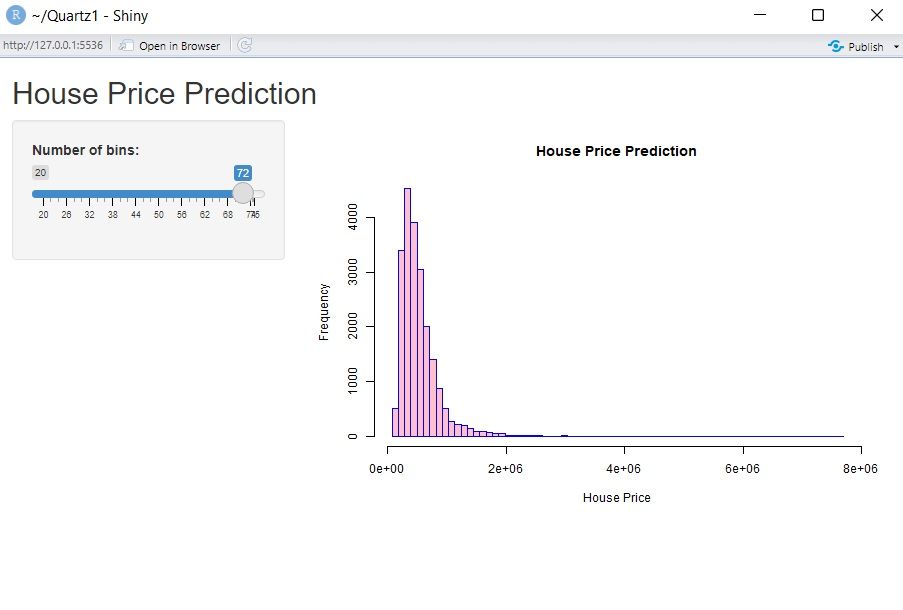

To view the RShiny App:

Group members:

Noor Anis Nabilla Binti Ismail

Nursyafiqah Sharmeen Binti Hussin

Ummilia Balqis Binti Harun

Thank you for teaching us, Dr. Sara. We really enjoyed the course and learned a lot!

Group: Pfyzer

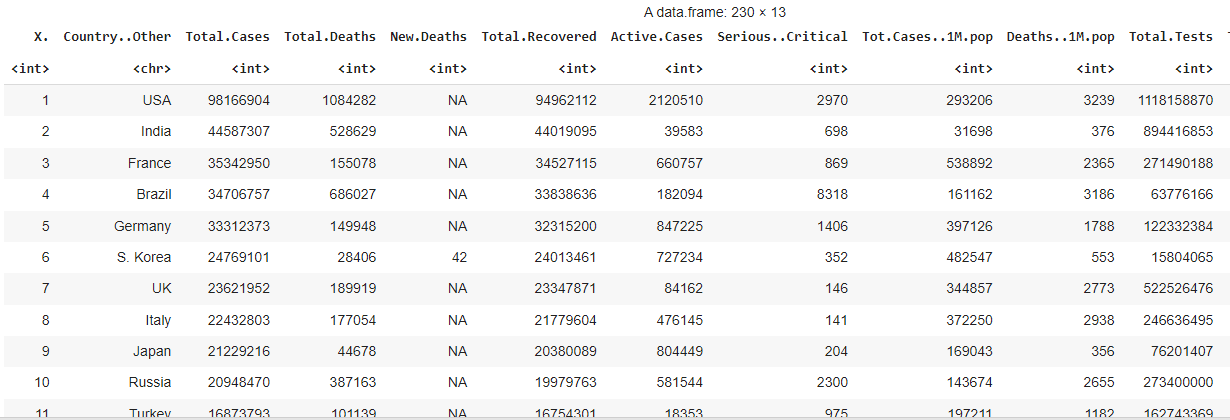

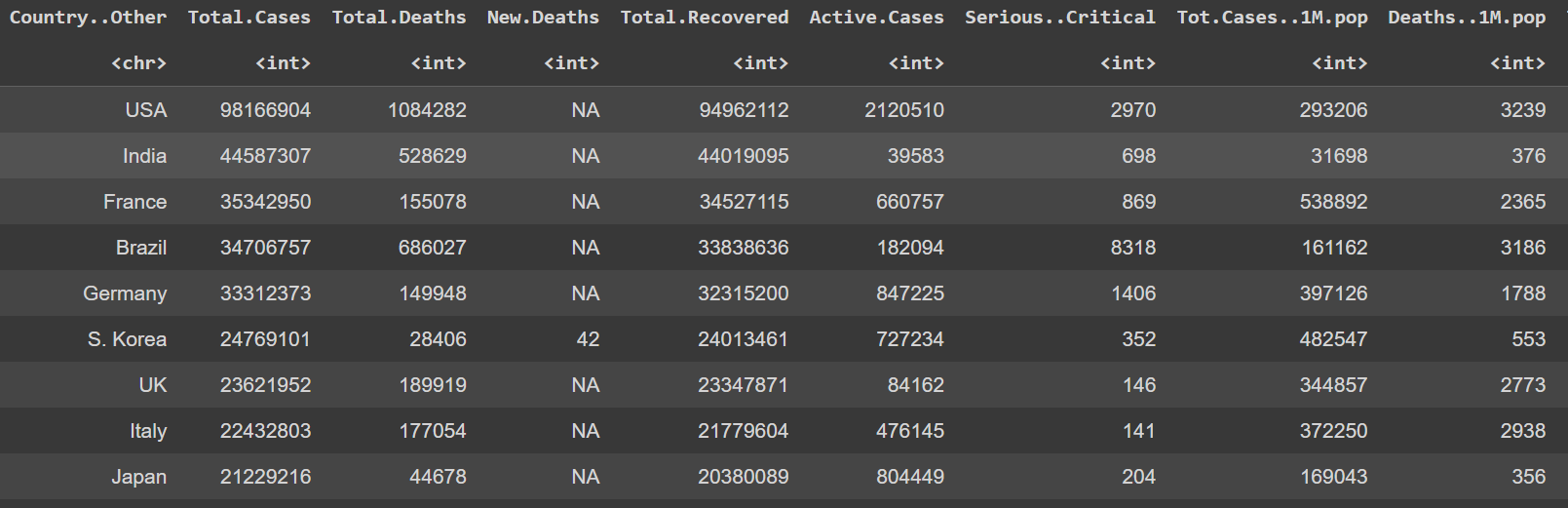

Our project title was "Covid-19 Total Case Prediction." The objective of our study is to analyze the total cases of Covid-19 from the total deaths, total recovered, and active cases across all countries, so that we can predict Covid-19 cases in the future. Our data consists of 230 rows and 13 columns. The data frame for the study is shown below.

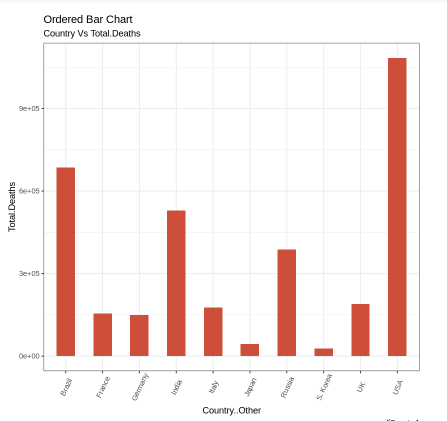

We visualized the data using ggplot. For example, we used an ordered bar chart to analyze the relationship between country and total deaths. Below are a few countries with their death counts.

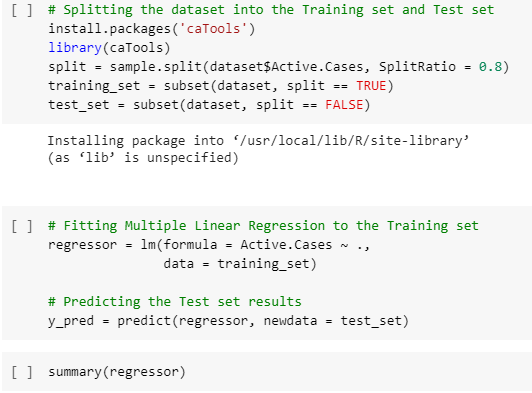

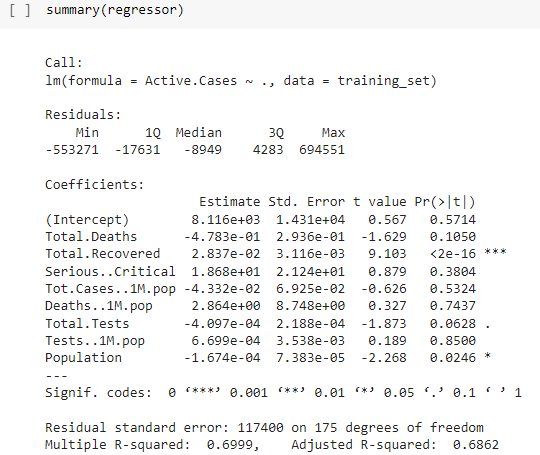

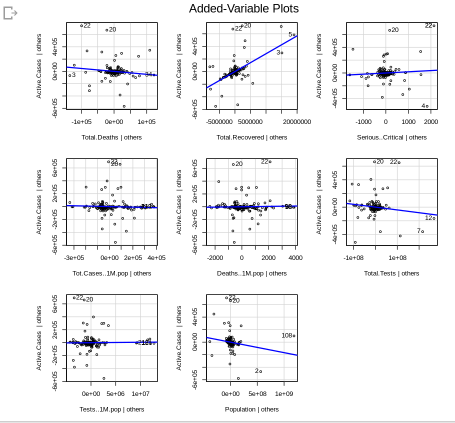

For the Machine Learning (ML) algorithm, we used Multiple Linear Regression because the data has more than one numerical input.
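To show what this choice looks like in practice, here is a minimal sketch of a multiple linear regression in base R. The data is randomly generated, and the column names (`total_deaths`, `total_recovered`, `active_cases`, `total_cases`) are assumed for illustration; the real data frame may name them differently.

```r
# Hypothetical data: 230 rows with three numeric inputs and one numeric target.
set.seed(1)
n <- 230
covid <- data.frame(
  total_deaths    = round(runif(n, 0, 5e4)),
  total_recovered = round(runif(n, 0, 1e6)),
  active_cases    = round(runif(n, 0, 3e5))
)
# Assumed target: the sum of the inputs plus some noise (illustrative only).
covid$total_cases <- covid$total_deaths + covid$total_recovered +
  covid$active_cases + round(rnorm(n, sd = 5000))

# Multiple linear regression: one numeric output, several numeric inputs.
mlr <- lm(total_cases ~ total_deaths + total_recovered + active_cases,
          data = covid)
print(coef(mlr))

# R-squared gives a quick view of how well the inputs explain the target.
r_squared <- summary(mlr)$r.squared
print(r_squared)
```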

That's all I can share. My friends and I really enjoyed joining this programme because we were trained by professional trainers. Thank you so much, Dr. Sara! Stay safe always.

Hi! We are from Pfyzer Group. Here are our findings from analyzing real-time datasets of live Covid-19 cases.

- Our data is about the prediction of Covid-19 total cases. The total cases of Covid-19 include the active cases, total deaths, and total recovered all around the world. The data frame of 230 rows and 12 columns is shown below:

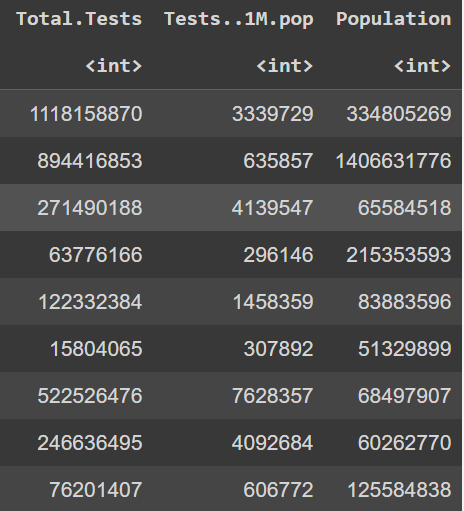

- We visualized our data using box plots and ggplot charts such as a bar chart, lollipop chart, line plot, and scatter plot. Below is one example:

Lollipop Chart

Observation: The United States of America (USA) has the highest number of recovered Covid-19 cases among the top ten countries analysed.

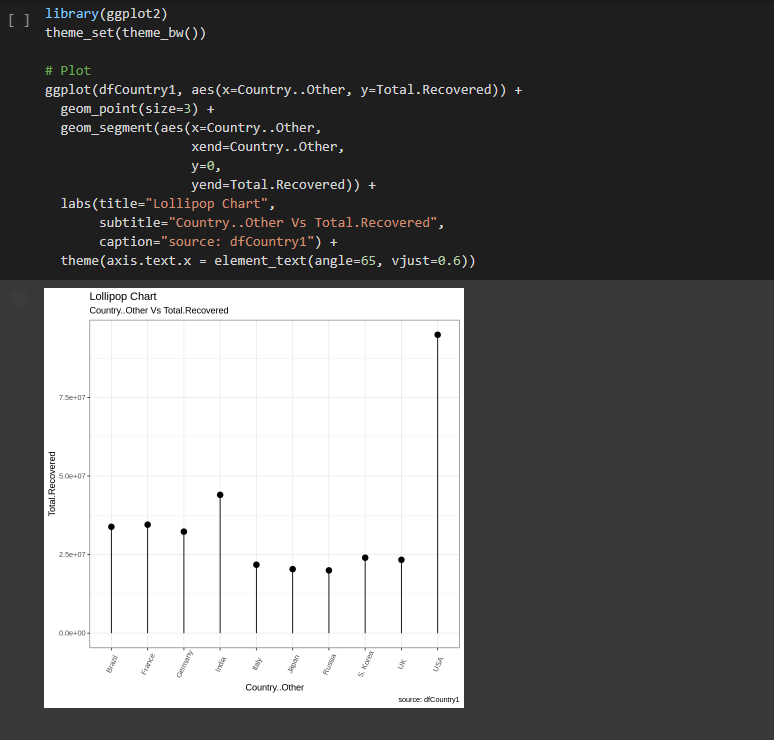

- The Machine Learning algorithm we used to analyze our data is Multiple Linear Regression. We chose it because the data has more than one independent variable and one dependent variable. Put simply, the data frame has more than one input and one numerical output. The results are visualized below:

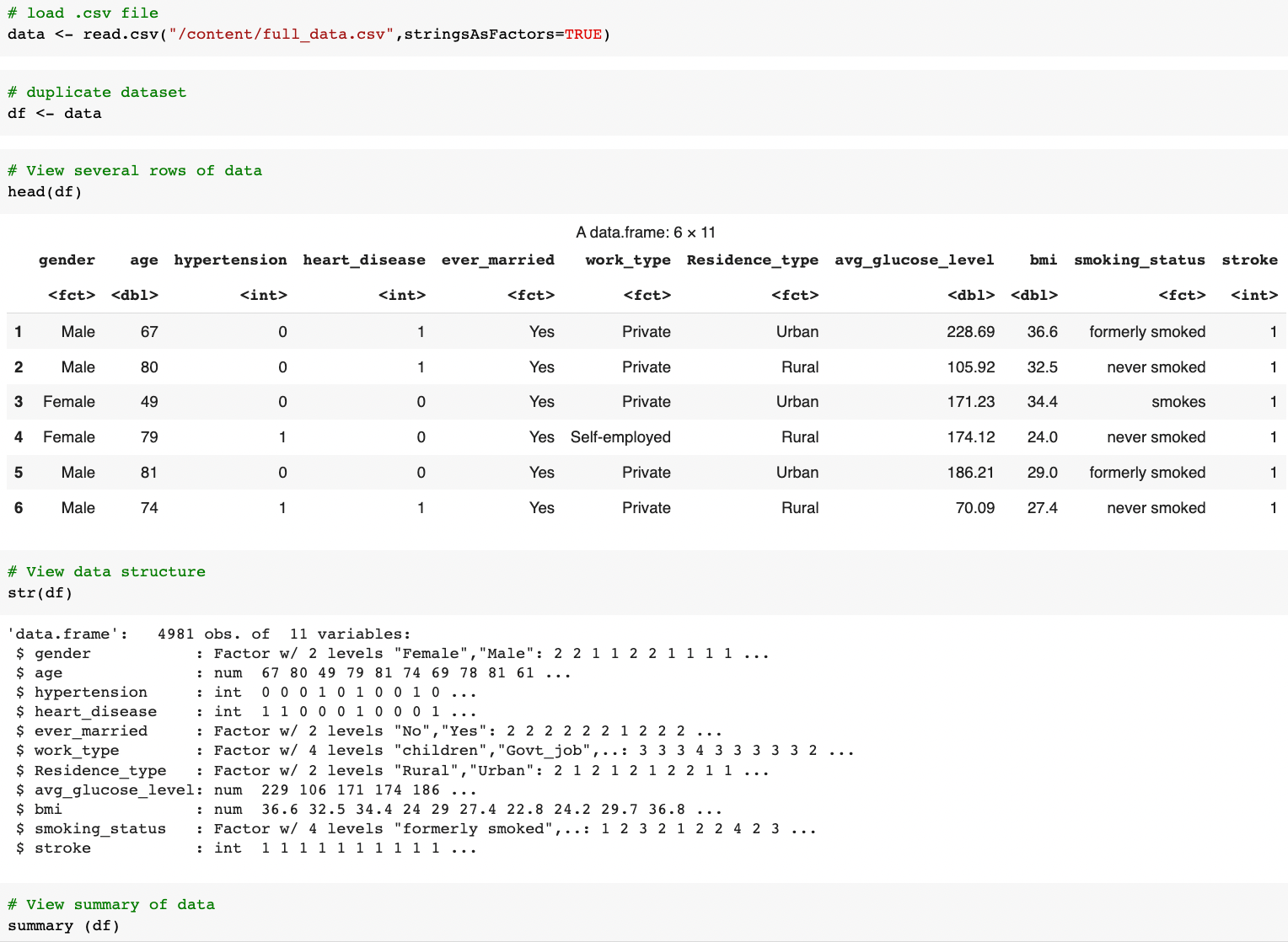

Dataset : full_data.csv

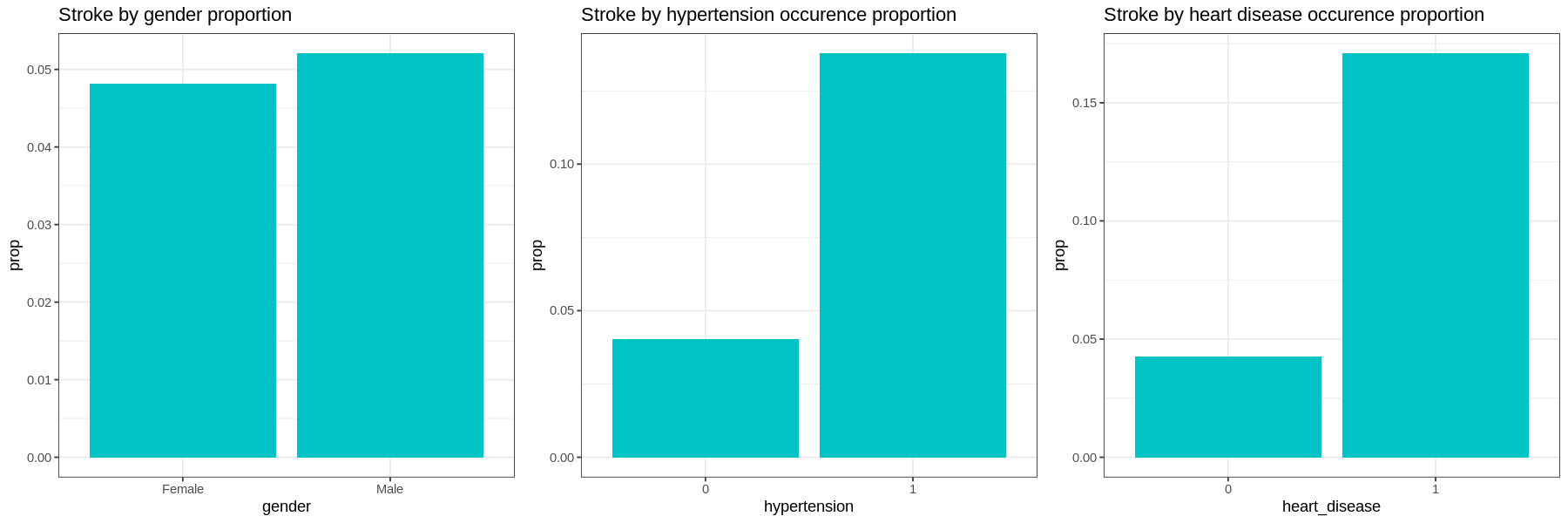

1. Understanding the data

As no description was provided with the dataset, we began by establishing a general understanding of the dataset and identifying the input and output variables.

We pay attention to:

- Number of columns and their names -> what the data is about and how many variables we are going to analyze (preparing our minds for the degree of complexity we are going to handle 🥶).

- Data type of each column -> which columns should be the input variables, and which should be the target output. This also gives an idea of what kind of prediction should be performed: is it a regression or a classification problem?

- Number of rows -> how many samples we have.

From this undertaking:

- We learned that this dataset has 4981 rows and 11 columns labeled gender, age, hypertension, heart_disease, ever_married, work_type, Residence_type, avg_glucose_level, bmi, smoking_status, and stroke.

- Thus, we assumed this dataset came from the health sector and was sampled from 4981 patients.

- We deduced that this dataset collects 10 parameters (input variables) on patients with and without stroke (1 output variable, or target).

- As such, we are going to treat stroke prediction as a classification problem.

2. Data Cleaning

At this stage, we aimed to ensure our data is consistent across the dataset:

- no missing data

- appropriate data types and values (e.g. age, avg_glucose_level, and bmi shouldn't have negative values)

- consistent format (e.g. gender should be either 'Male' or 'Female' only, not a mixture of other representations such as 'F', 'male', etc.)

- self-explanatory labels

- relevant columns (no unique values like ID numbers, names, etc.)

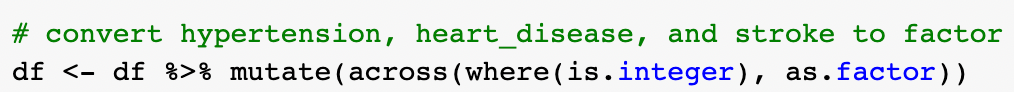

The given dataset is actually pretty clean and met the above-mentioned criteria. The only amendment we made was changing the data type of hypertension, heart_disease, and stroke from integer to factor, as these variables indicate whether the patient has the condition or not (yes/no).
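The cleaning checks and the integer-to-factor conversion described above could be sketched as follows. The four rows are made up for illustration, and the 0/1 coding of the integer columns is an assumption; only the column names come from the dataset.

```r
# Small hypothetical sample that reuses the dataset's column names.
stroke_data <- data.frame(
  age           = c(67, 45, 80, 23),
  hypertension  = c(0L, 1L, 0L, 0L),
  heart_disease = c(1L, 0L, 0L, 0L),
  stroke        = c(1L, 0L, 1L, 0L)
)

# Basic consistency checks: no missing values, no negative ages.
stopifnot(!anyNA(stroke_data))
stopifnot(all(stroke_data$age >= 0))

# Convert the yes/no indicator columns from integer to factor.
# Assumes 0 means "no" and 1 means "yes".
binary_cols <- c("hypertension", "heart_disease", "stroke")
stroke_data[binary_cols] <- lapply(
  stroke_data[binary_cols],
  factor, levels = c(0, 1), labels = c("no", "yes")
)

str(stroke_data)
```

Storing these columns as factors tells R to treat them as categories, so plotting and modelling functions handle them as classes and not as numbers.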

3. Exploratory Data Analysis

This stage aims to provide the descriptive (what happened) and diagnostic (why it happened) analysis. We approached the EDA by looking at categorical data, numerical data, and a combination of both to understand the relationships:

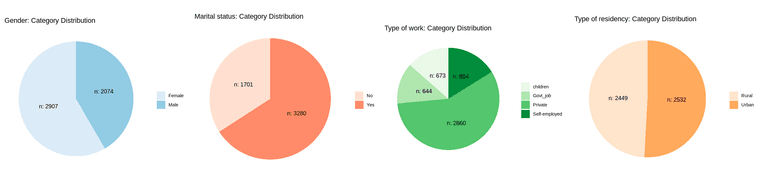

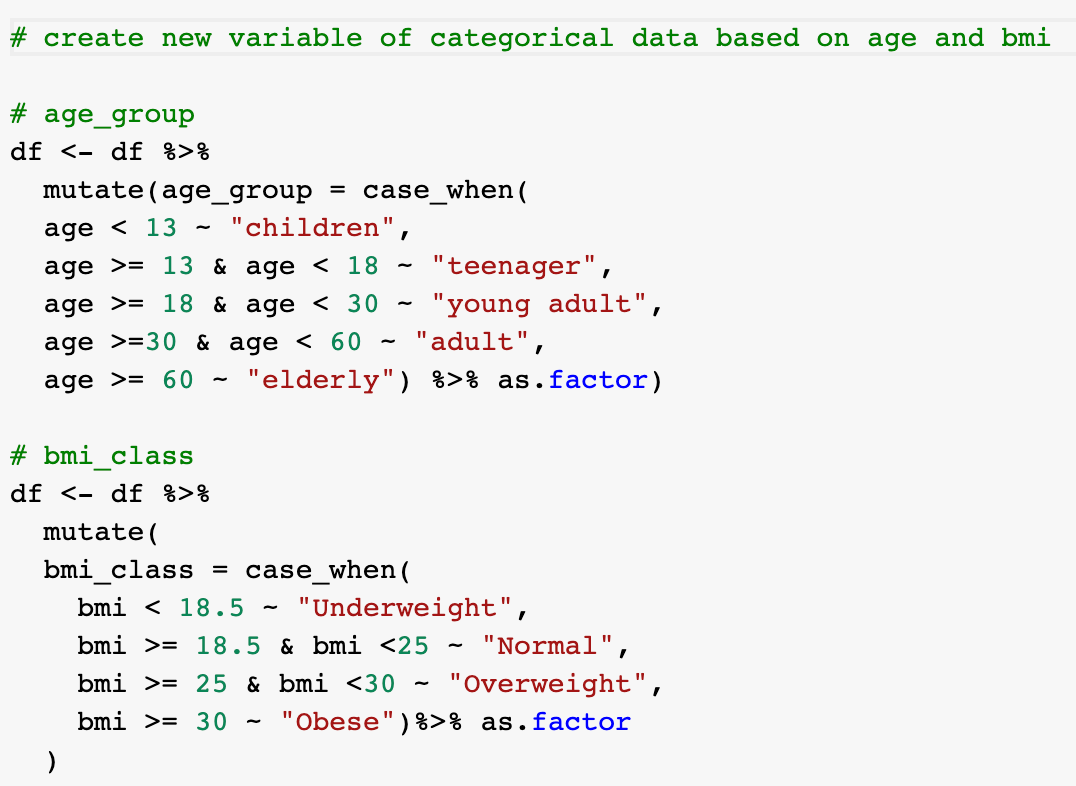

Categorical data

Distribution of samples by each category:

- Most of the sampled patients are married, work in the private sector, and have neither hypertension nor heart disease.

- The sampled patients are almost equally distributed by gender (male or female) and type of residence (rural or urban).

- The majority of the sampled patients are non-smokers (either never smoked or formerly smoked).

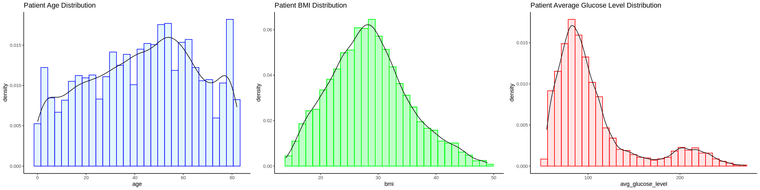

Numerical data

Range of each variable:

-

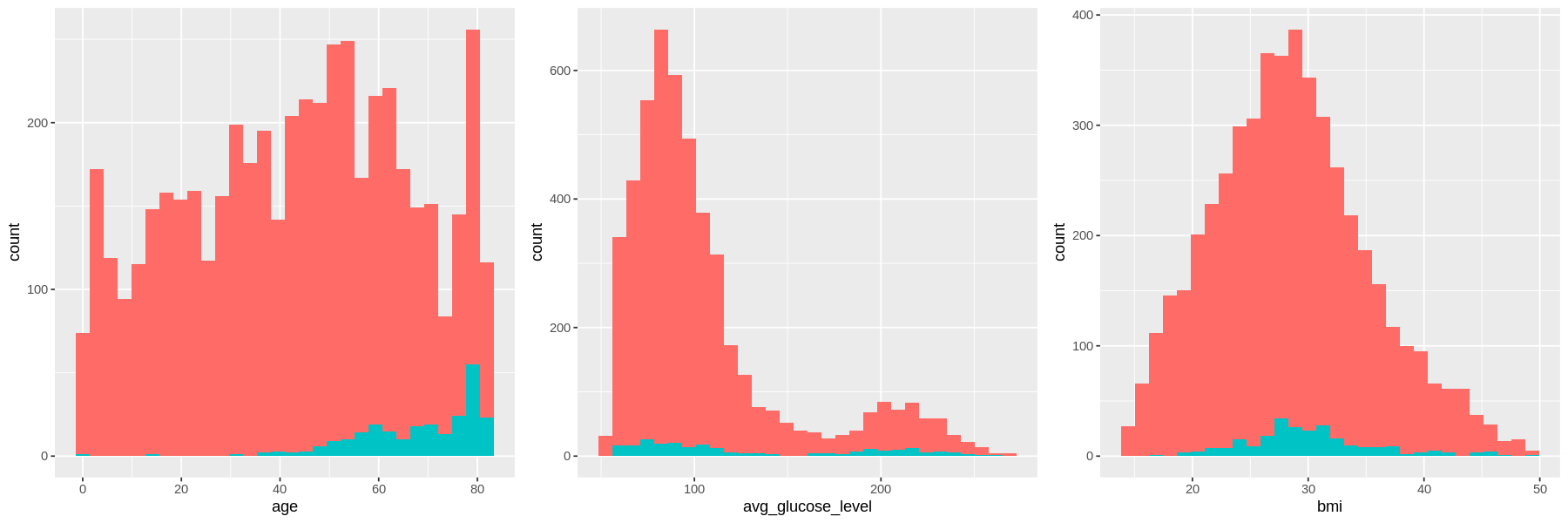

The age of sampled population of patients are 0<aged< 85, with most are between 40-60 and ~ 80 years old.

-

The sampled patient bmi are normally distributed with center ~30 (most of the patients have weight issue).

-

The glucose level skewed to the right with obvious 2 peaks at ~100 and ~200 glucose level (most of the patients have high blood sugar).

Understanding the relationship

The graphs show the number of patients with and without stroke for each factor.

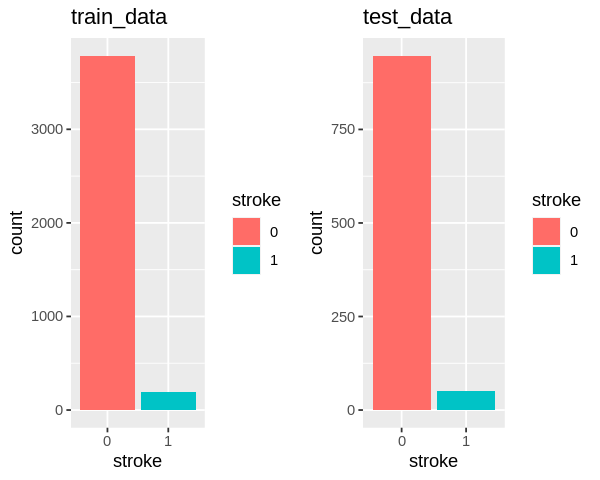

Here we can see that the number of patients who have had a stroke is small compared to those without stroke. In other words, we have imbalanced data with a significant lack of data for patients with stroke.

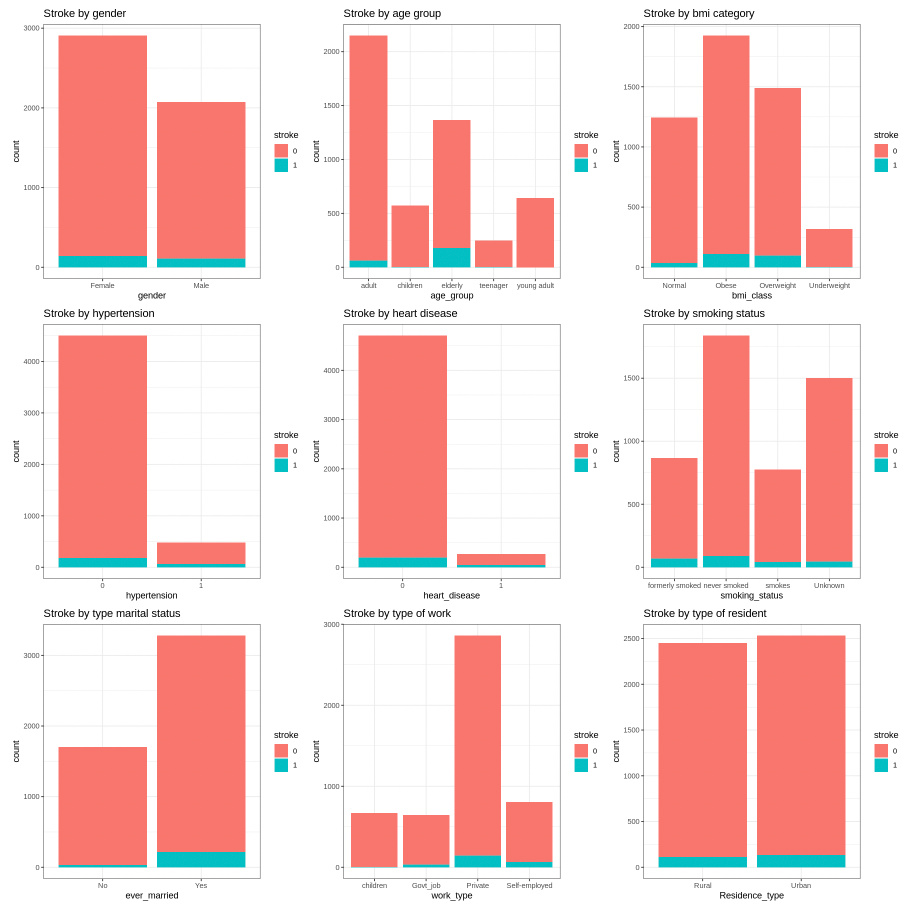

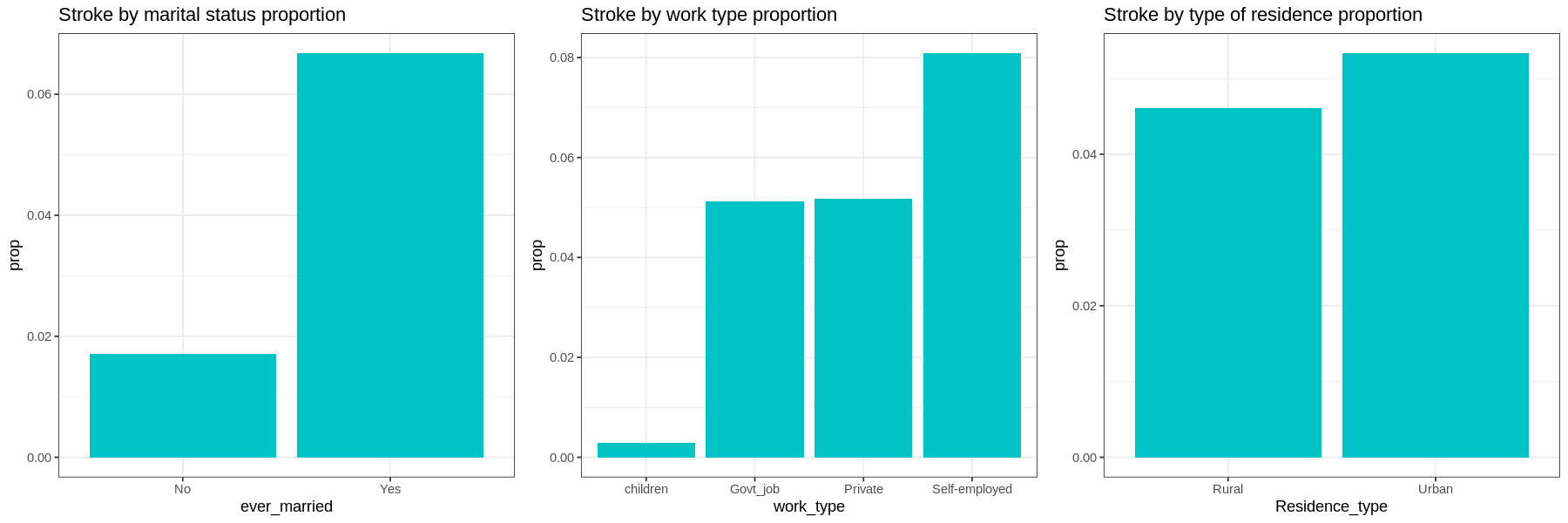

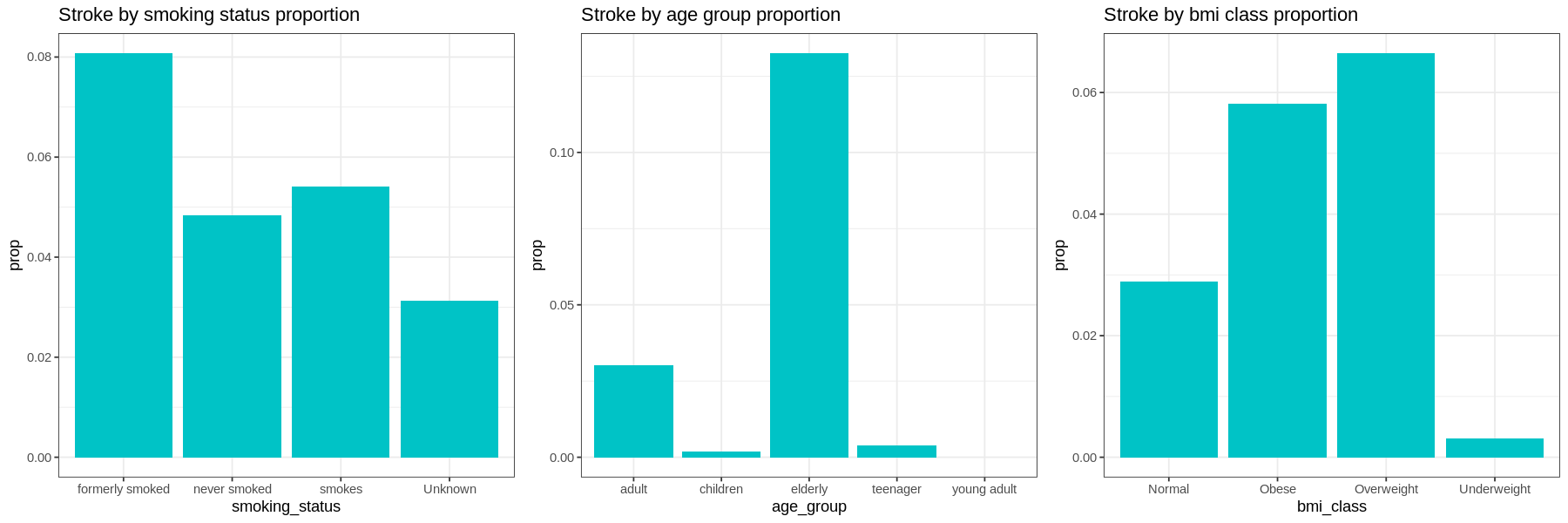

Graphs show the proportion of each factor for patients with stroke:

- Gender and residence type do not appear to make much difference in the occurrence of strokes.

- Those with hypertension, heart disease, or those who have been married have a much higher proportion of their populations having had a stroke.

- In terms of work type, children have very low occurrences of strokes. There is little difference in the proportions between those who work in government and those who work in the private sector. The self-employed have a higher proportion of strokes than other sectors.

- Current smokers have a higher proportion of their population having had a stroke than those who have never smoked. Former smokers have a higher occurrence of strokes than current smokers. Those with unknown smoking status have a low occurrence of strokes.

- The elderly age group has the highest proportion of strokes, followed by the adult group. Interestingly, the occurrence of stroke in children is higher than in young adults.

- The proportion of stroke patients who are overweight or obese is high compared to other bmi classes, with overweight slightly higher than obese. Those who have strokes are very seldom underweight.
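Proportions like the ones discussed above can be computed with a cross-tabulation. The sketch below uses eight made-up patients and a single factor (`hypertension`) to keep it short; the values are illustrative and do not come from our dataset.

```r
# Hypothetical sample: one categorical factor and the stroke outcome.
patients <- data.frame(
  hypertension = c("no", "no", "yes", "yes", "no", "yes", "no", "no"),
  stroke       = c("no", "no", "yes", "no", "no", "yes", "yes", "no")
)

# Count patients for each combination of factor level and outcome.
counts <- table(patients$hypertension, patients$stroke,
                dnn = c("hypertension", "stroke"))

# Row-wise proportions: the share of each hypertension group that had a stroke.
stroke_rate <- prop.table(counts, margin = 1)
print(round(stroke_rate, 2))

# Overall class balance, useful for spotting imbalanced data.
class_balance <- prop.table(table(patients$stroke))
print(class_balance)
```

Comparing proportions within each group, and not raw counts, matters here because the groups differ a lot in size.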

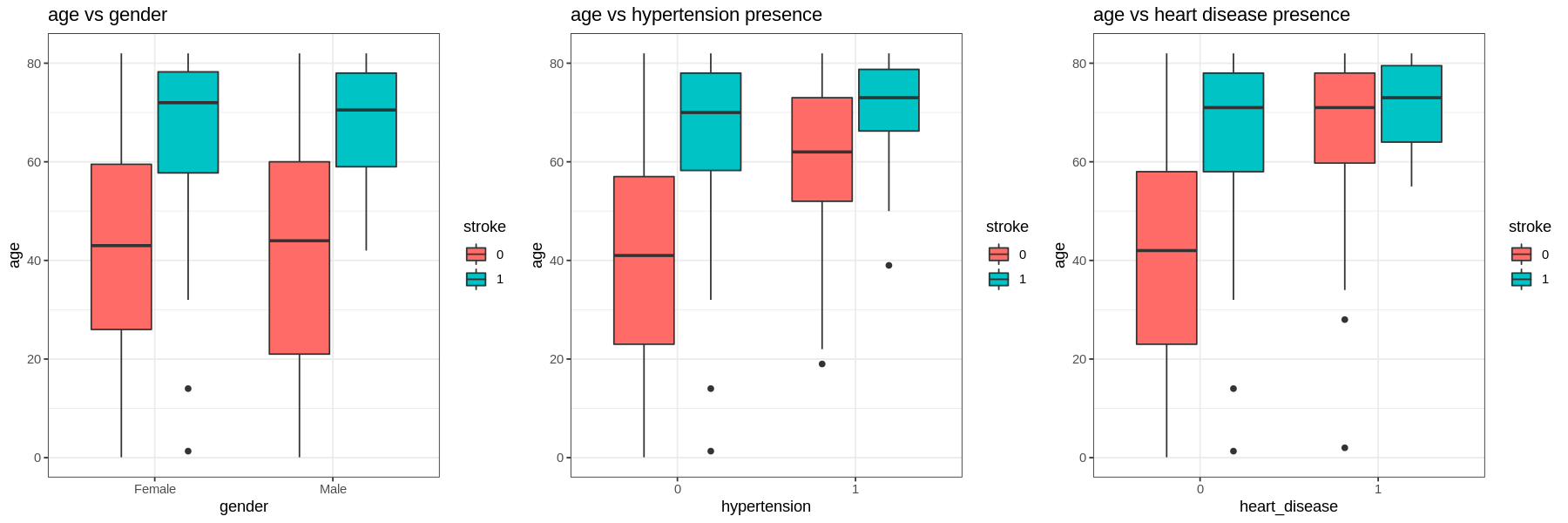

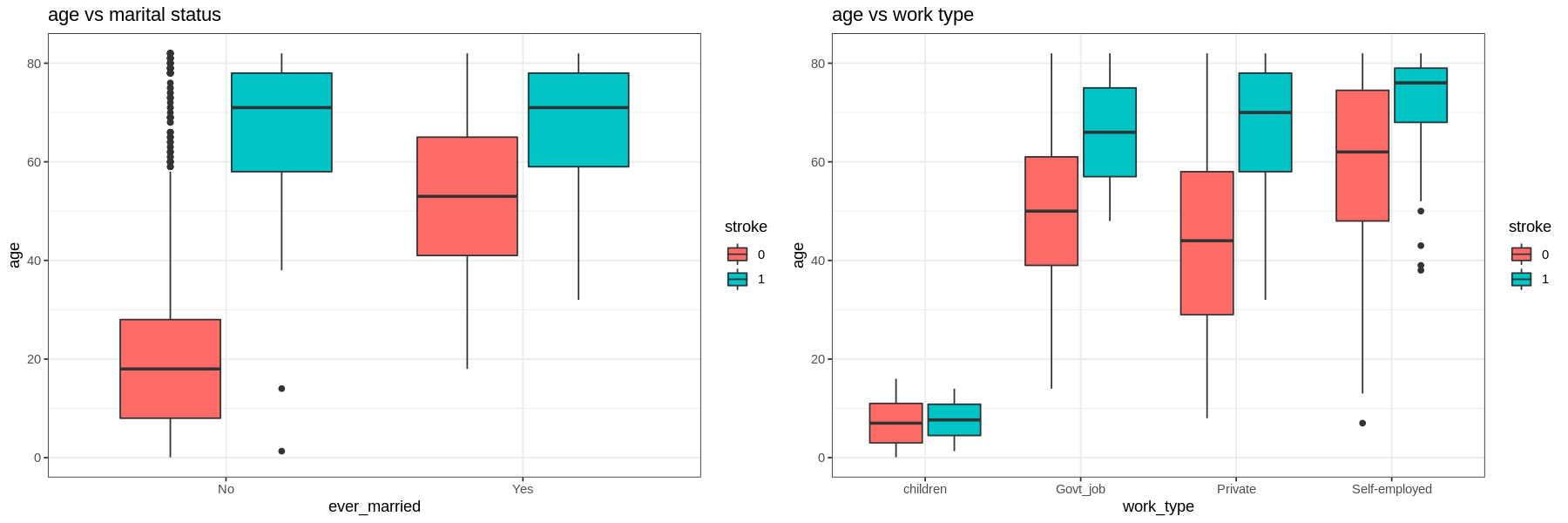

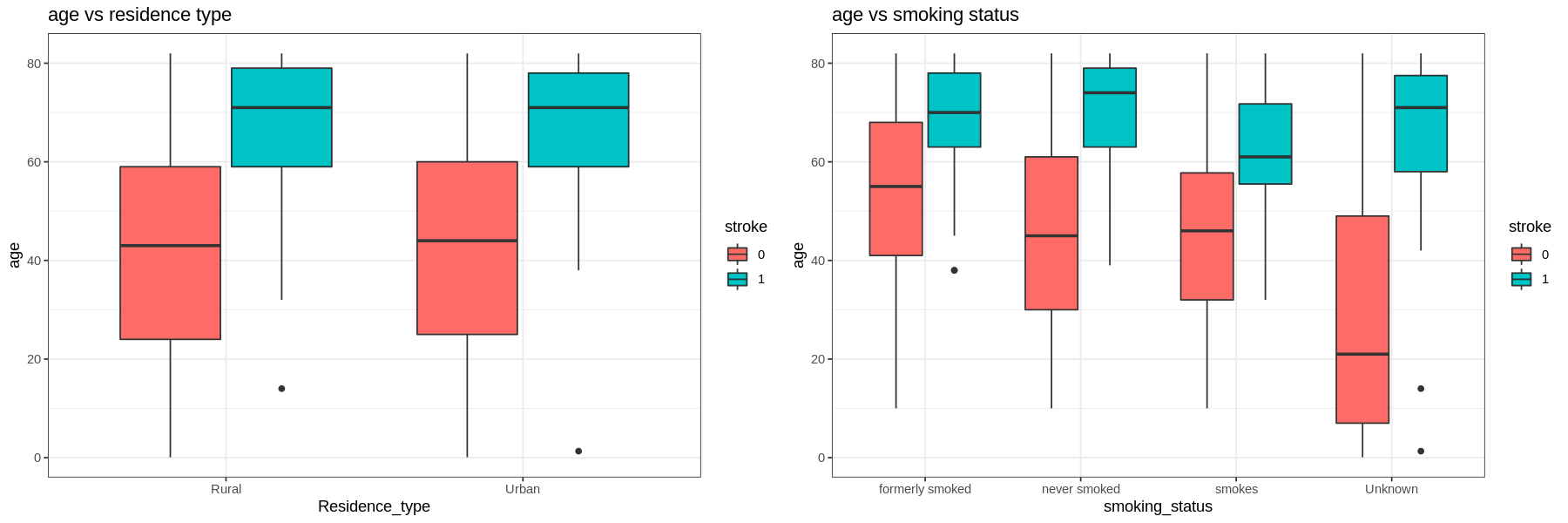

Next, we analyze each factor by age, average glucose level, and bmi.

- For all levels in each factor, those who have had a stroke are older.

- Those with hypertension and heart disease are older than those without. The self-employed are also older than those in other types of work.

- Those who had a stroke and smoke are younger than those who quit or never smoked (but still had a stroke).

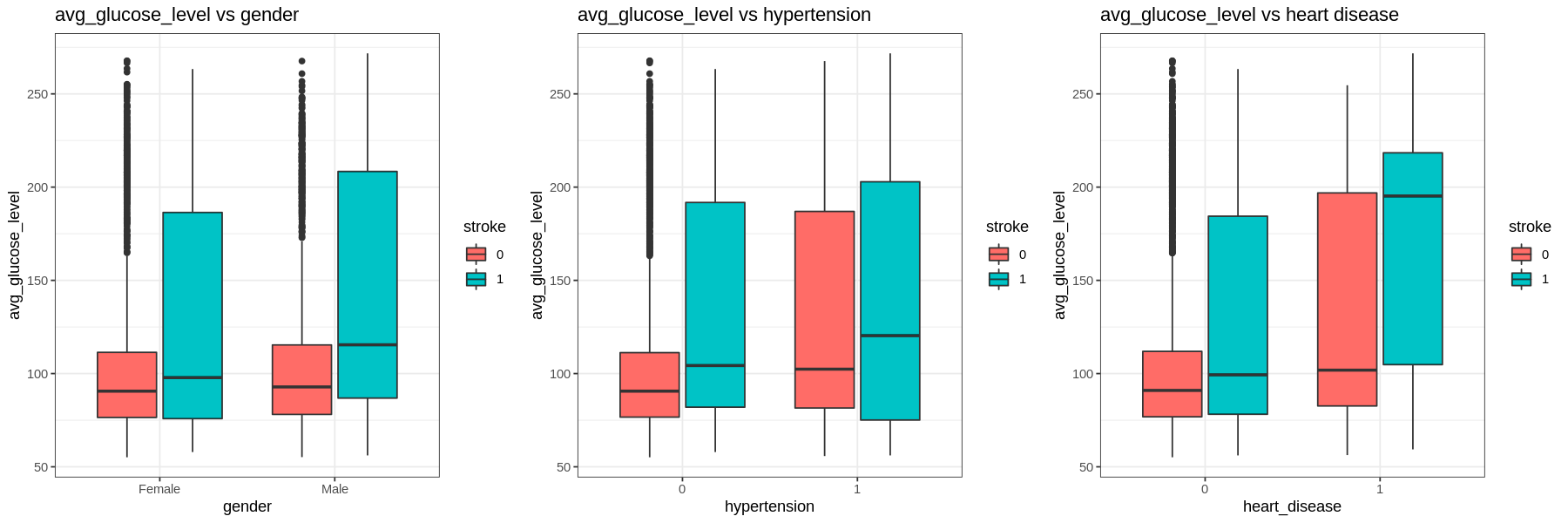

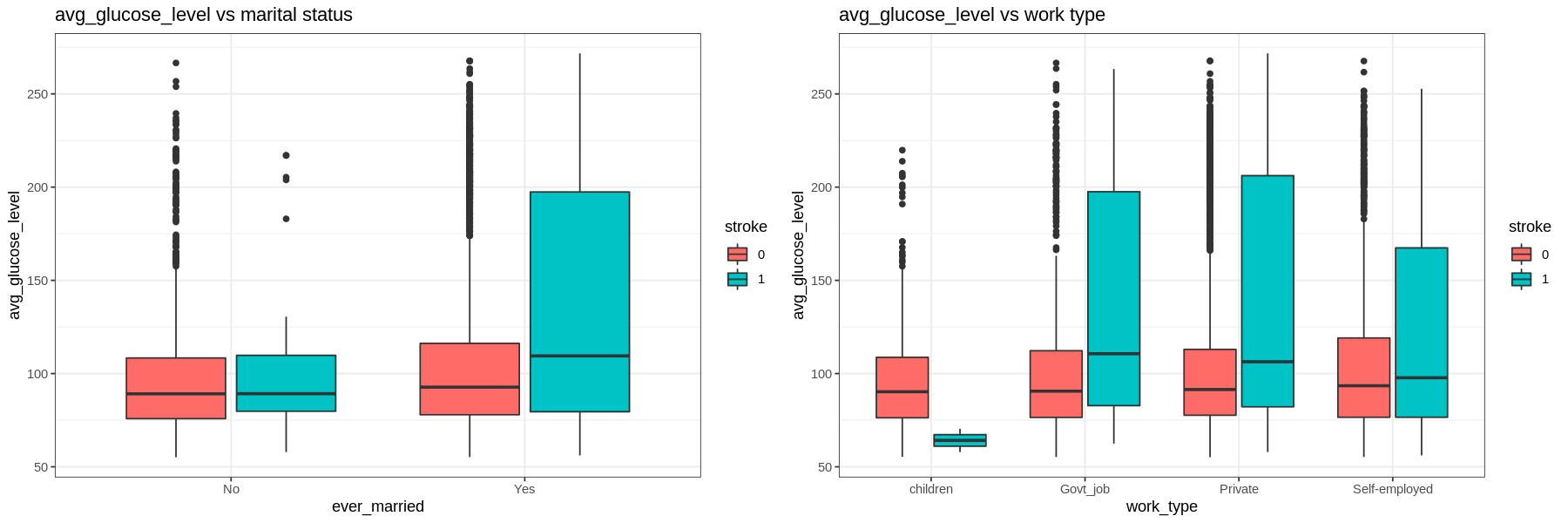

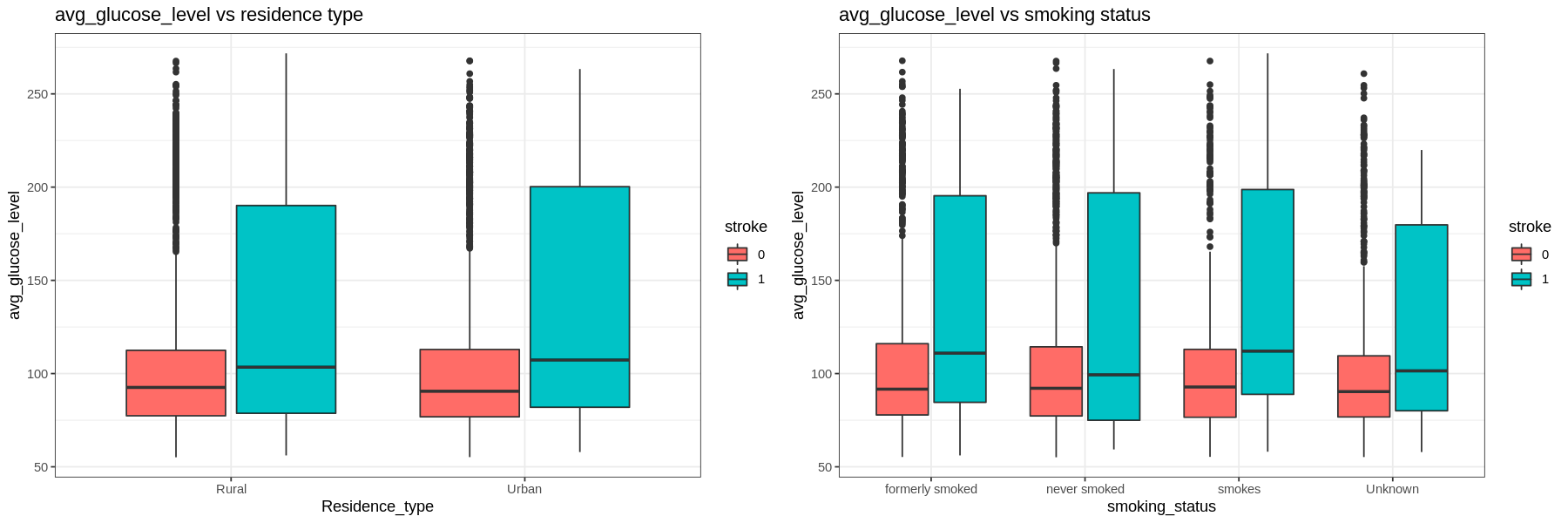

The average glucose level is right skewed.

- The IQR tends to go higher for those who had a stroke. Those with hypertension and heart disease have higher glucose levels regardless of having a stroke or not.

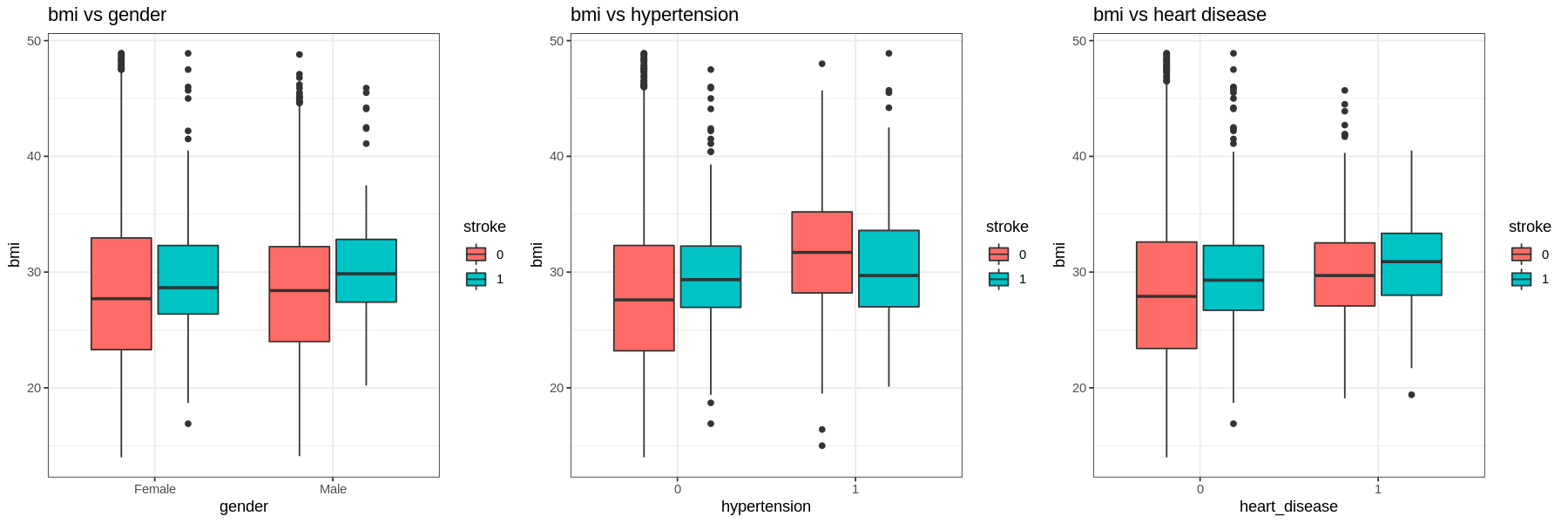

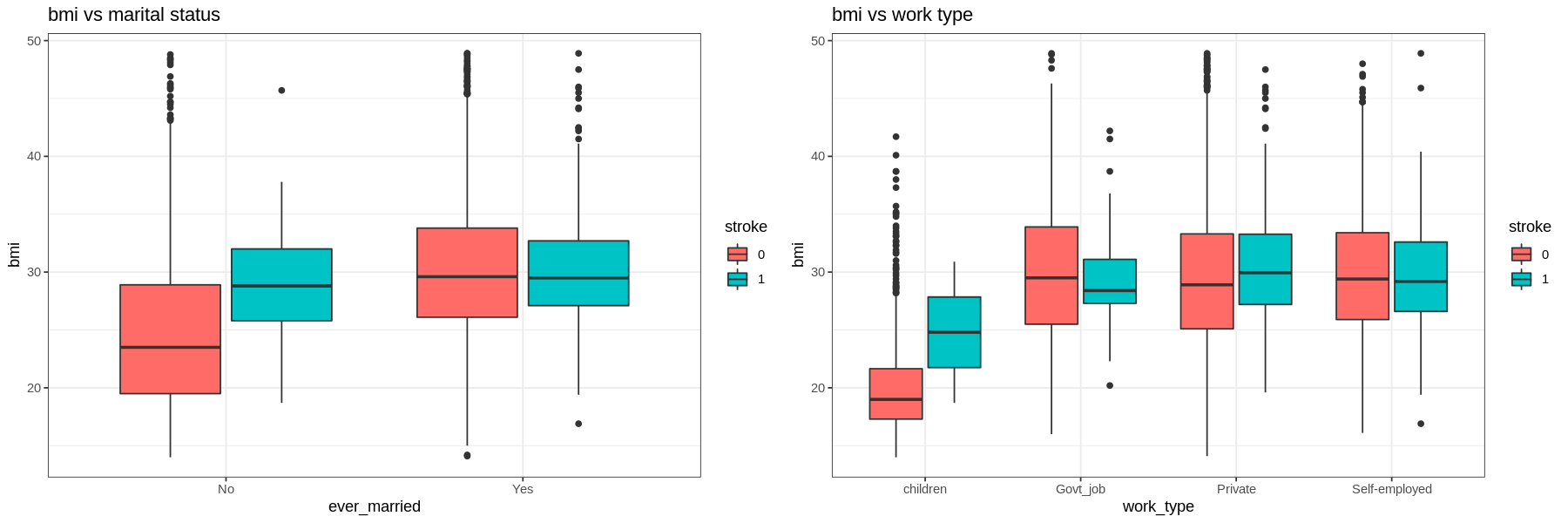

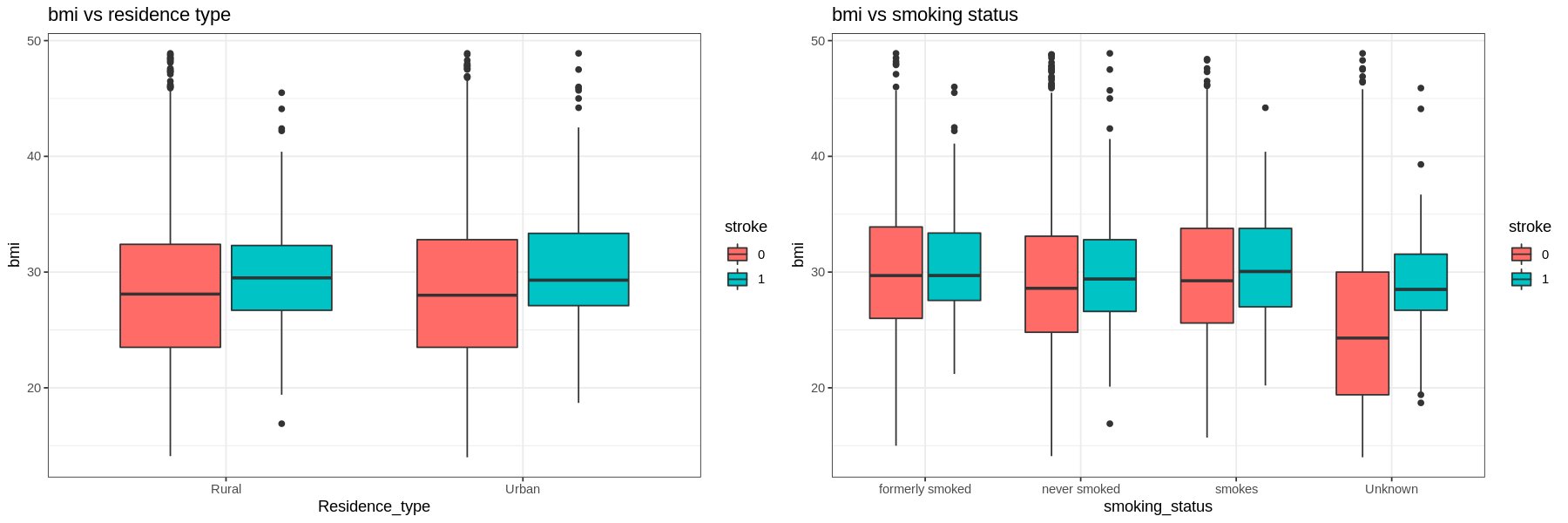

The graphs show that there is not much difference in the BMI of those who had a stroke and those who had not.

As age increases, the number of strokes increases.

The distribution for average glucose level is bimodal for both stroke and no stroke populations, with peaks at the same values. However, the density of strokes at higher glucose levels is higher than the density of no strokes at the same level.

There is no difference in the distribution of bmi between those who have had a stroke and those who have not.

4. Machine Learning

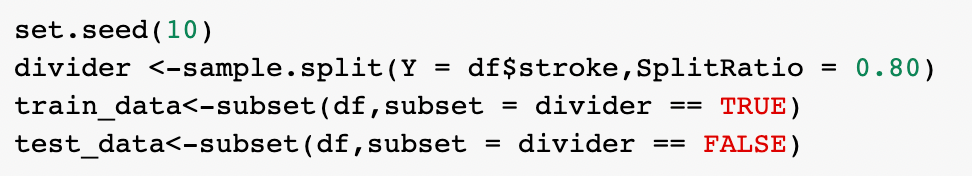

We split the dataset into training and testing sets at an 80:20 ratio.
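An 80:20 split can be done in base R by sampling row indices. This is a minimal sketch on placeholder data; the `row_id` and `x` columns are hypothetical, and only the row count (4981) comes from the dataset.

```r
# Placeholder data frame with the same number of rows as the dataset.
set.seed(123)  # fixed seed so the split is reproducible
n <- 4981
dataset <- data.frame(row_id = seq_len(n), x = rnorm(n))

# Randomly pick 80% of the row indices for training.
train_idx <- sample(seq_len(n), size = floor(0.8 * n))

# Training set: sampled rows. Testing set: all remaining rows.
train_set <- dataset[train_idx, ]
test_set  <- dataset[-train_idx, ]

cat("training rows:", nrow(train_set), "\n")
cat("testing rows:", nrow(test_set), "\n")
```

With imbalanced classes, a stratified split (sampling within each class separately) may be worth considering so that both sets contain stroke cases.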

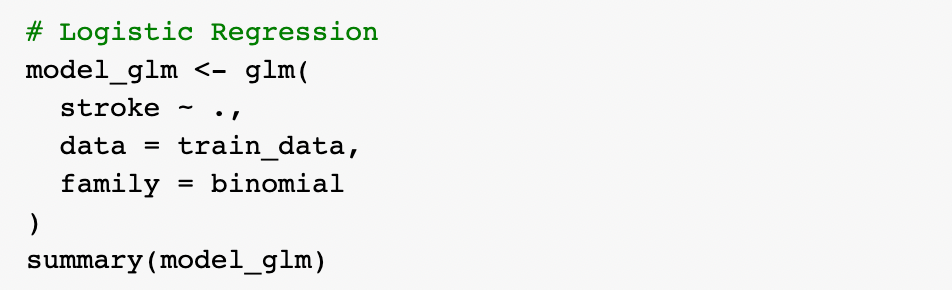

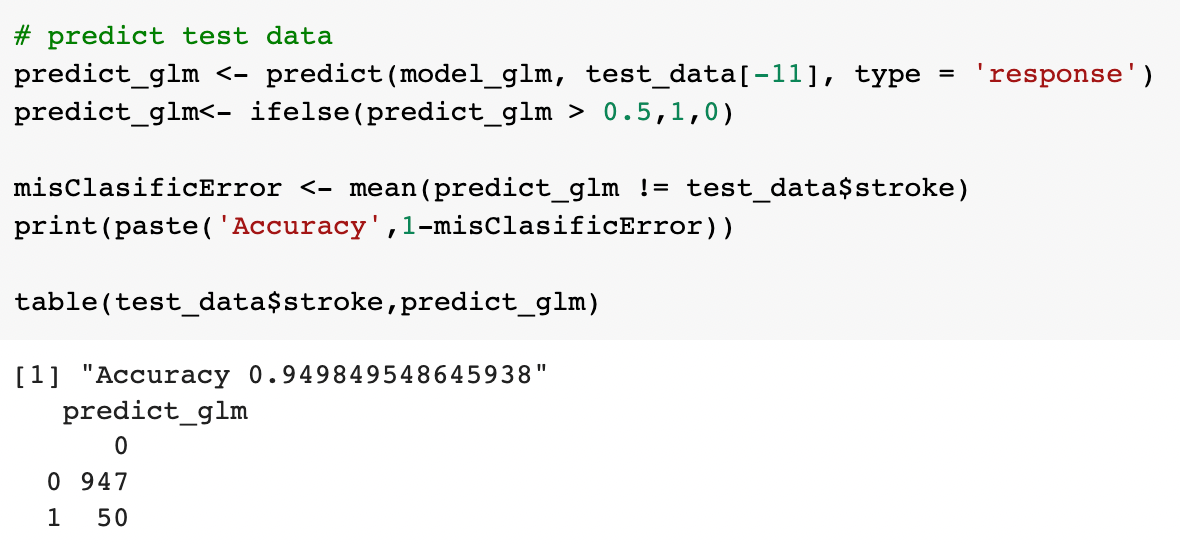

1) Logistic regression

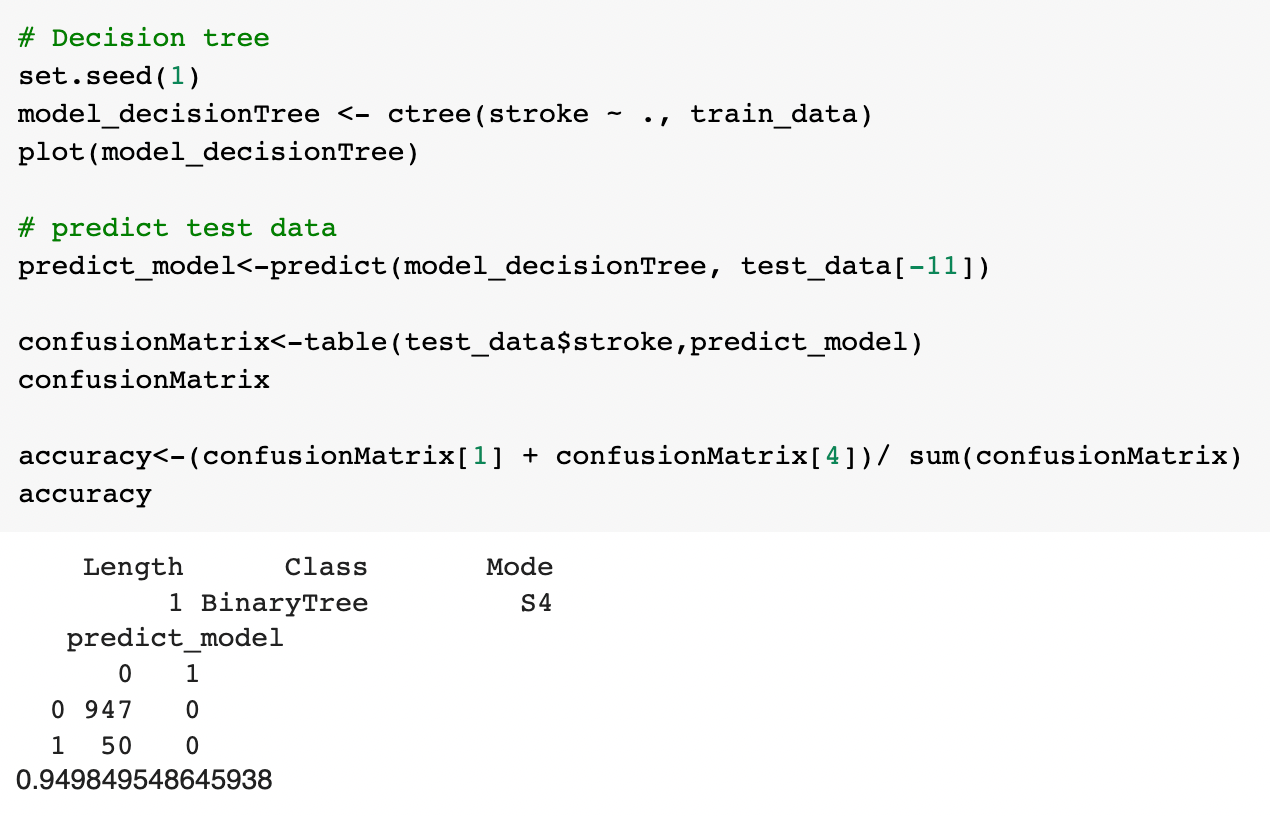

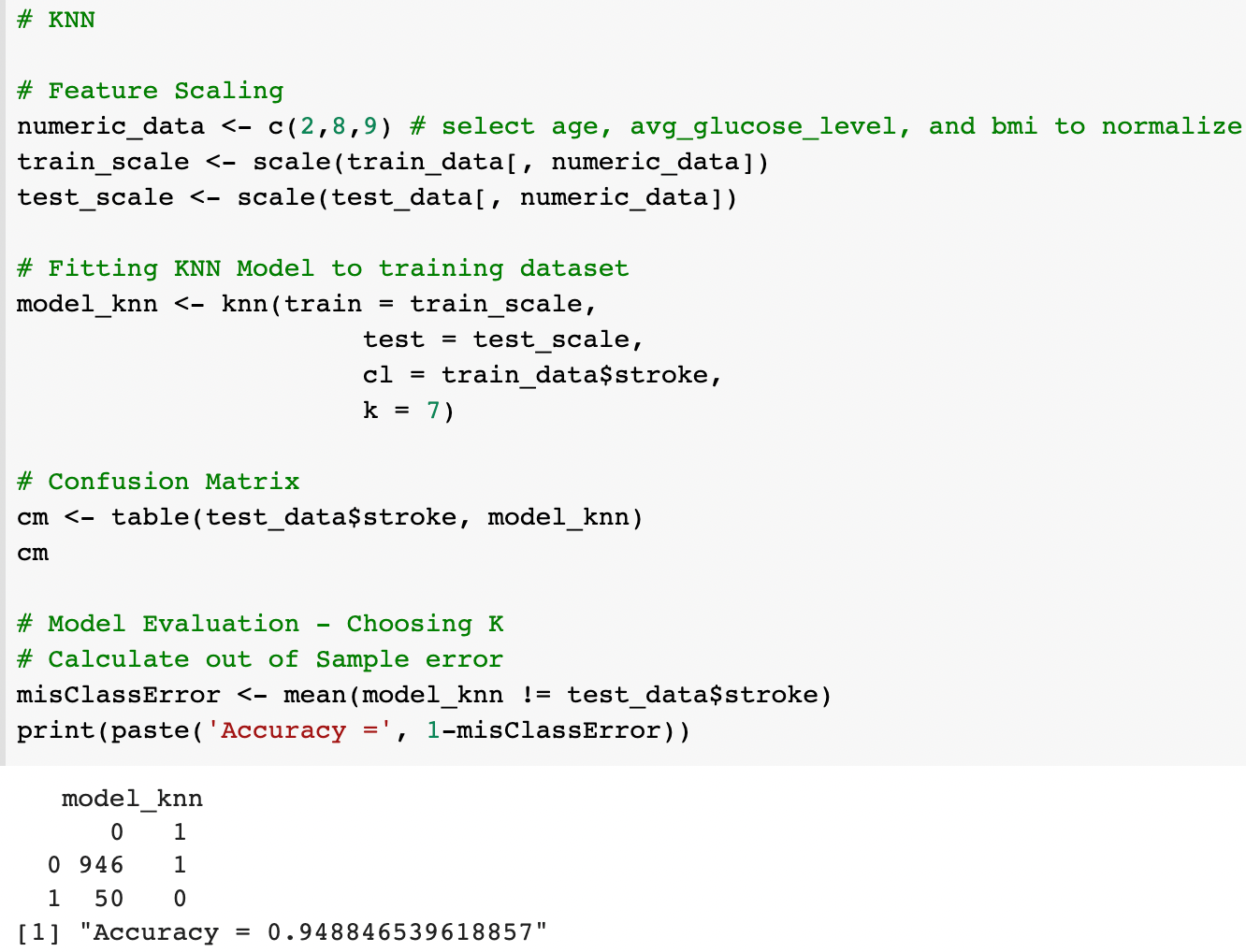

2) Decision tree

3) KNN

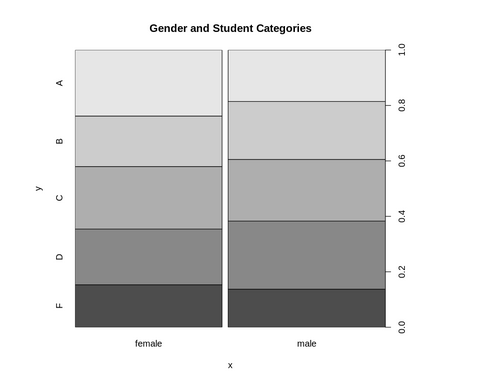

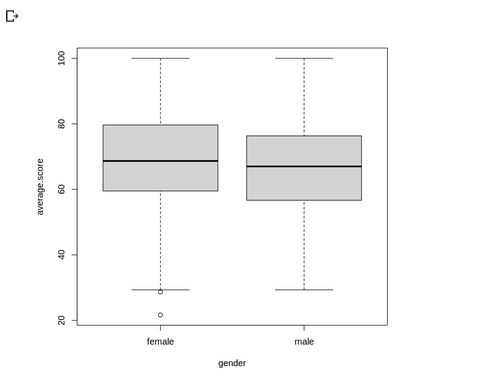

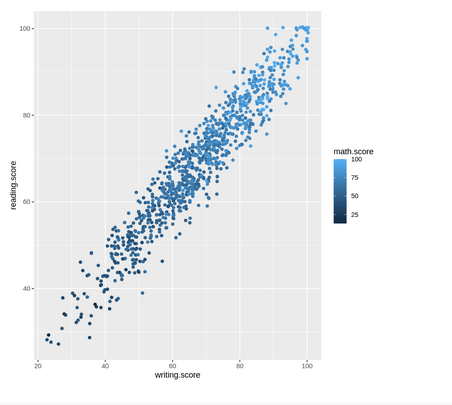
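Of the three classifiers listed above, logistic regression is the easiest to sketch with base R alone. The example below uses simulated data; the coefficients used to generate the outcome and the 0.5 decision threshold are assumptions for illustration, not values from our analysis.

```r
# Simulated patients with two numeric inputs (names follow the dataset).
set.seed(7)
n <- 500
sim <- data.frame(
  age               = runif(n, 1, 85),
  avg_glucose_level = runif(n, 60, 250)
)

# Hypothetical relationship: stroke risk rises with age and glucose level.
p <- plogis(-7 + 0.07 * sim$age + 0.008 * sim$avg_glucose_level)
sim$stroke <- factor(rbinom(n, 1, p), levels = c(0, 1), labels = c("no", "yes"))

# 80:20 train/test split.
train_idx <- sample(seq_len(n), size = floor(0.8 * n))
train_set <- sim[train_idx, ]
test_set  <- sim[-train_idx, ]

# Logistic regression: binomial family models the probability of "yes".
fit <- glm(stroke ~ age + avg_glucose_level, data = train_set,
           family = binomial)

# Predicted probabilities on the test set, converted to class labels.
prob <- predict(fit, newdata = test_set, type = "response")
pred <- factor(ifelse(prob > 0.5, "yes", "no"), levels = c("no", "yes"))

# Confusion matrix and accuracy.
conf_matrix <- table(predicted = pred, actual = test_set$stroke)
print(conf_matrix)
accuracy <- sum(diag(conf_matrix)) / sum(conf_matrix)
print(accuracy)
```

On imbalanced data, accuracy alone can be misleading, because a model that always predicts "no" still scores highly. The confusion matrix shows how many stroke cases were actually caught.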

Group: HADA

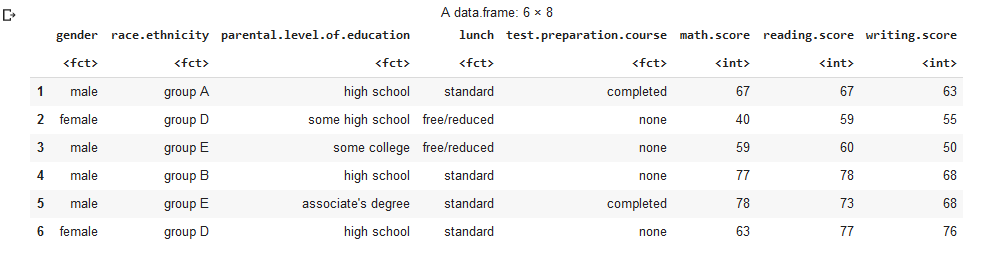

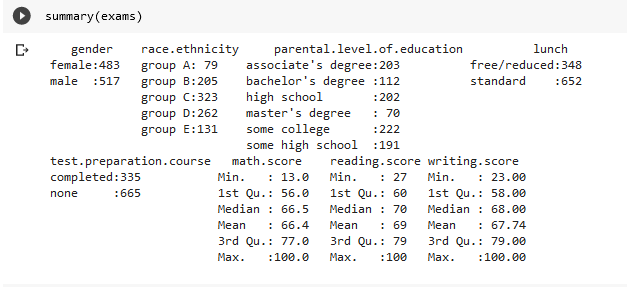

Dataset: exams

The data we worked with is exams.csv, which contains score data for completed tests.

Understanding the data:

From data exploration, we can define the columns as follows:

• gender : gender of the student

• race.ethnicity : the student's ethnicity group, from Group A to Group F

• parental.level.of.education : the education level of the student's parents

• lunch : the type of lunch taken by the student

• test.preparation.course : the status of the student's test preparation course

• math.score : the mathematics test score of the student

• reading.score : the reading test score of the student

• writing.score : the writing test score of the student

Data Cleansing & Analysis:

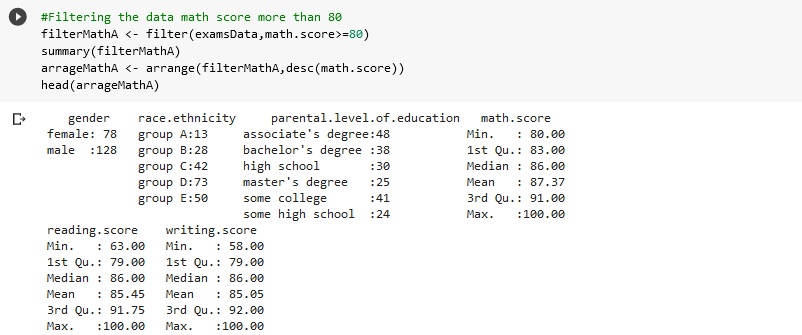

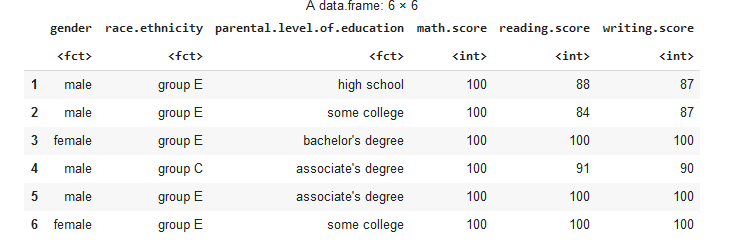

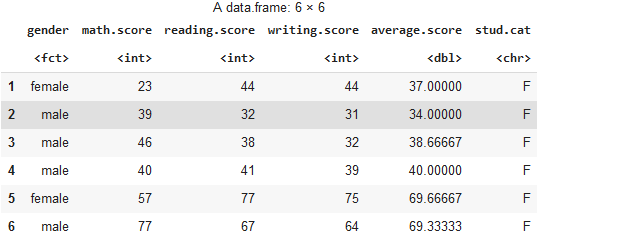

We filtered the data for rows where math.score is more than 80.

Next, we created a new column for each student's average score, and another for their category based on that average:
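The filtering and new-column steps could look like the sketch below. The four rows are made up, the new column names (`average.score`, `category`) are hypothetical, and the category cut-offs (60 and 80) are assumptions chosen for the example. Here the new columns are added to the full data frame, which may differ from what we did in the screenshots.

```r
# Hypothetical sample using the score columns from exams.csv.
exams <- data.frame(
  math.score    = c(85, 72, 91, 60),
  reading.score = c(90, 65, 88, 70),
  writing.score = c(80, 70, 95, 50)
)

# Keep only the rows where the math score is above 80.
high_math <- subset(exams, math.score > 80)
print(high_math)

# New column: average of the three test scores for each student.
score_cols <- c("math.score", "reading.score", "writing.score")
exams$average.score <- rowMeans(exams[, score_cols])

# New column: category based on the average (assumed cut-offs).
exams$category <- cut(
  exams$average.score,
  breaks = c(0, 60, 80, 100),
  labels = c("low", "medium", "high"),
  include.lowest = TRUE
)
print(exams)
```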

Machine Learning:

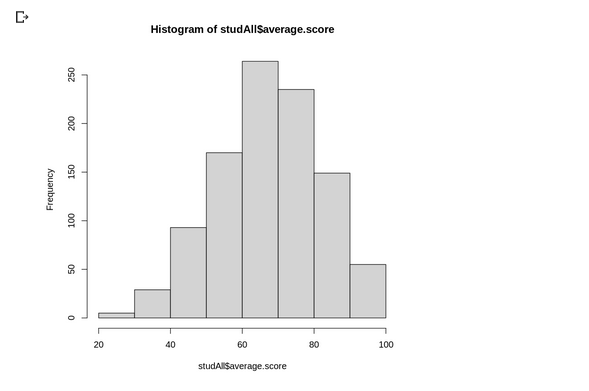

After analyzing and exploring the data, we visualized it:

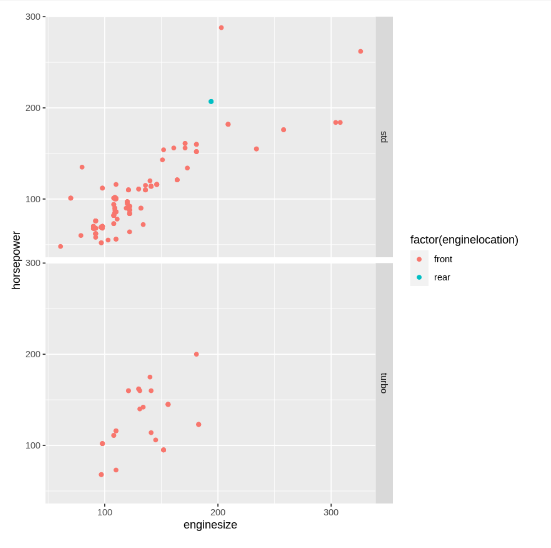

Group name: MOMO

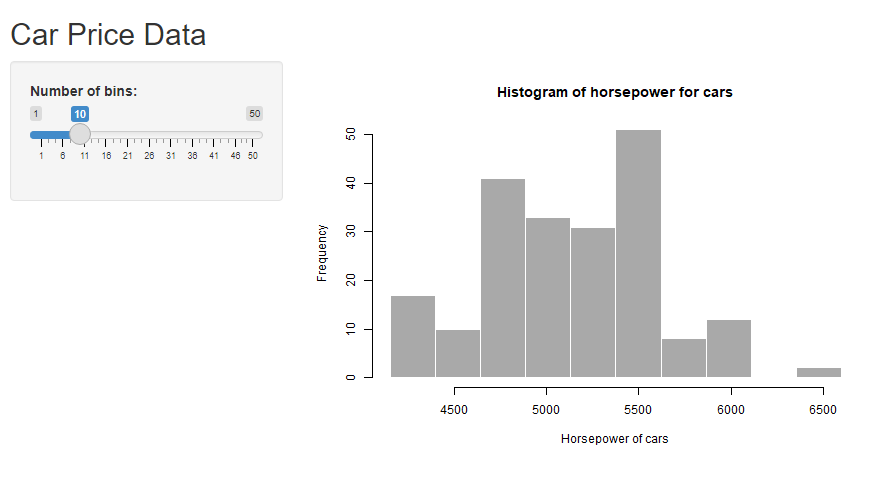

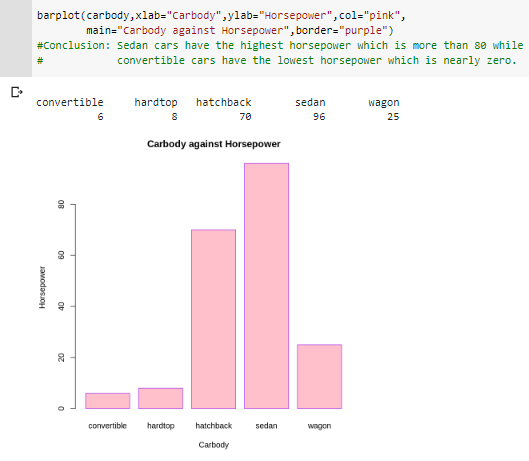

Our data is a large data set of different types of cars across the American market. We are required to model the price of cars with the available independent variables.

From the data we can see that horsepower and engine size positively affect the price of the car. As horsepower and engine size increase, the price of the car also increases.

After that, for machine learning, we used multiple linear regression because our data has more than one input and a numeric output, or target.

The above figure is our histogram chart that was created using R Shiny.

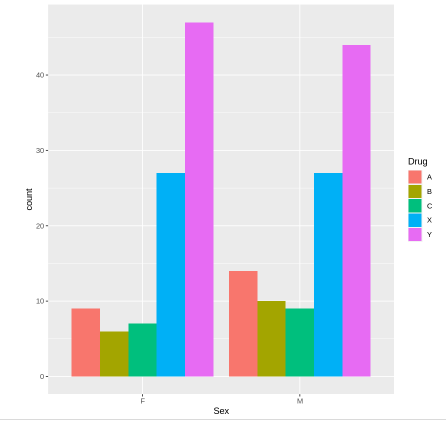

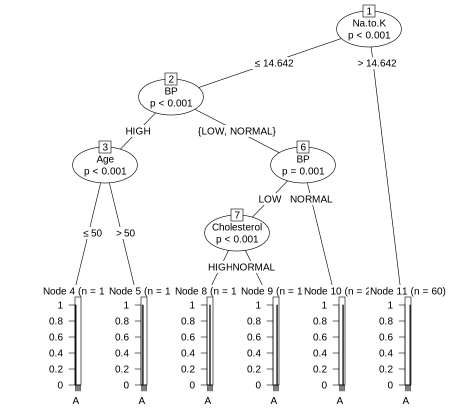

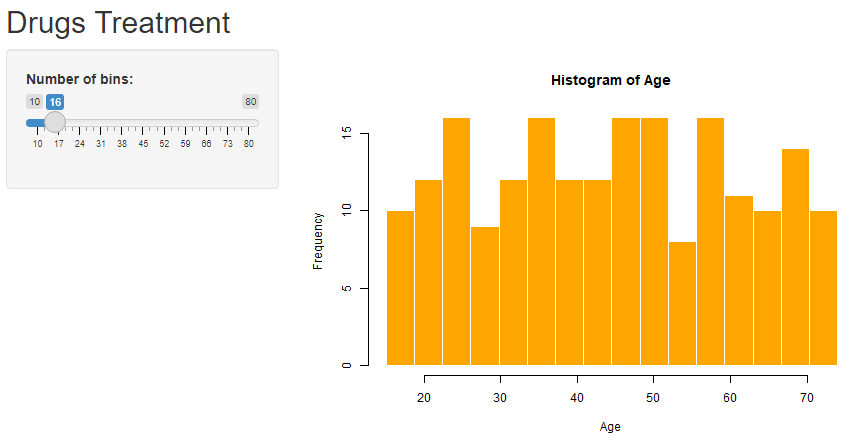

Our data is about treatments using 5 types of drugs.

To analyse the data, we employed the ggplot2 package available in the R programming language. The data is used to find which drug is better for patients with different blood pressure and cholesterol levels.

The histogram below shows the relationship between age and the types of drugs. Our finding is that the drugs have almost similar proportions between sexes.

The ML algorithm used is supervised (classification, decision tree) because the target is categorical.

We can conclude that Drug A plays a more important role compared to the other drugs.

Here, we also plotted a histogram of the patients' ages used in this observation. The histogram was plotted using the R Shiny package.

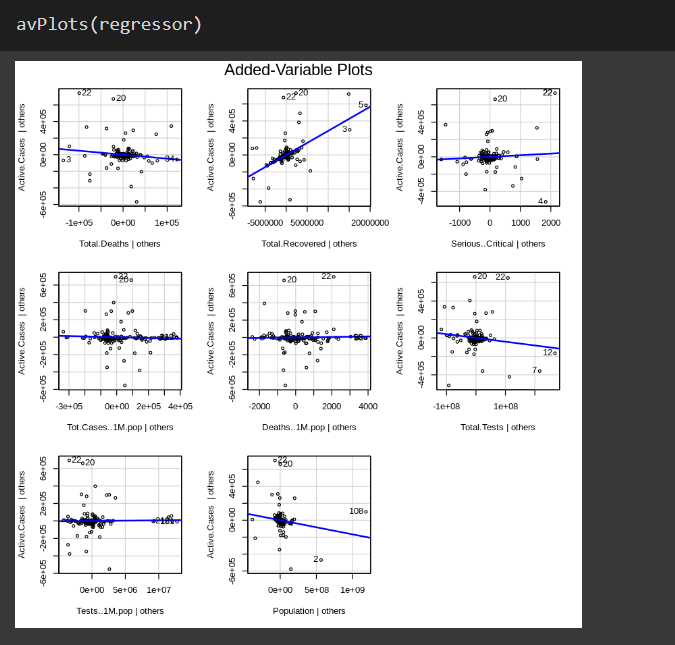

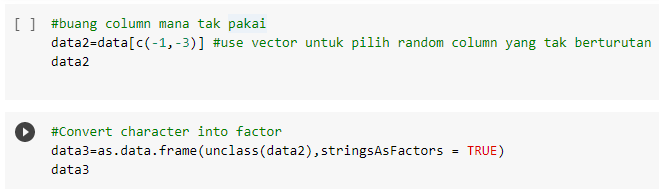

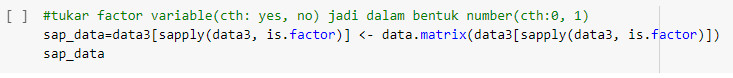

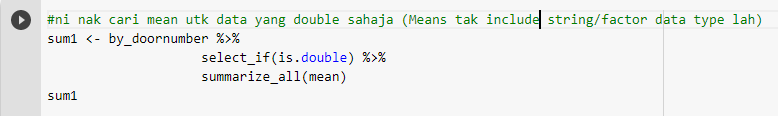

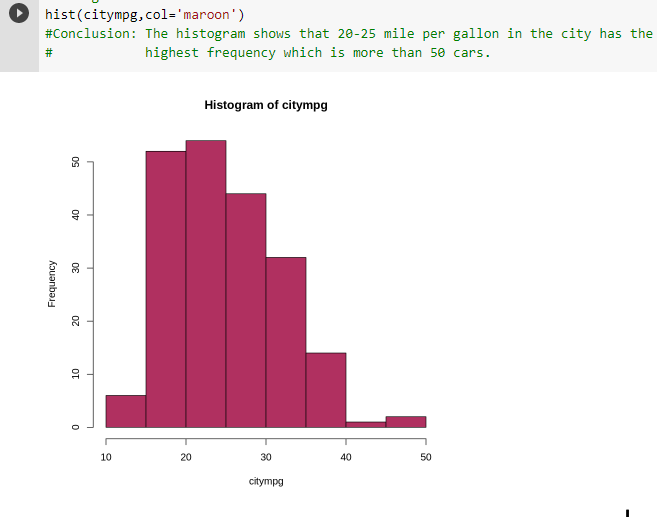

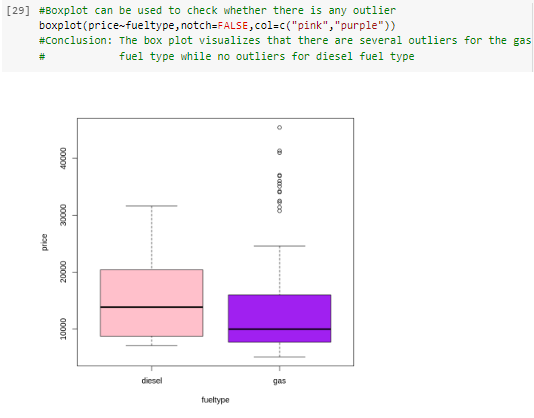

Hi to all. Here I want to share our experience and some findings from the R Programming course taught by our easy-to-understand trainer, @saranyaravikumar. Below is some of our code:

Next are a few snapshots from our findings:

We are really grateful and enjoyed our R Programming lessons! We hope we can retain what we have learnt for future reference.